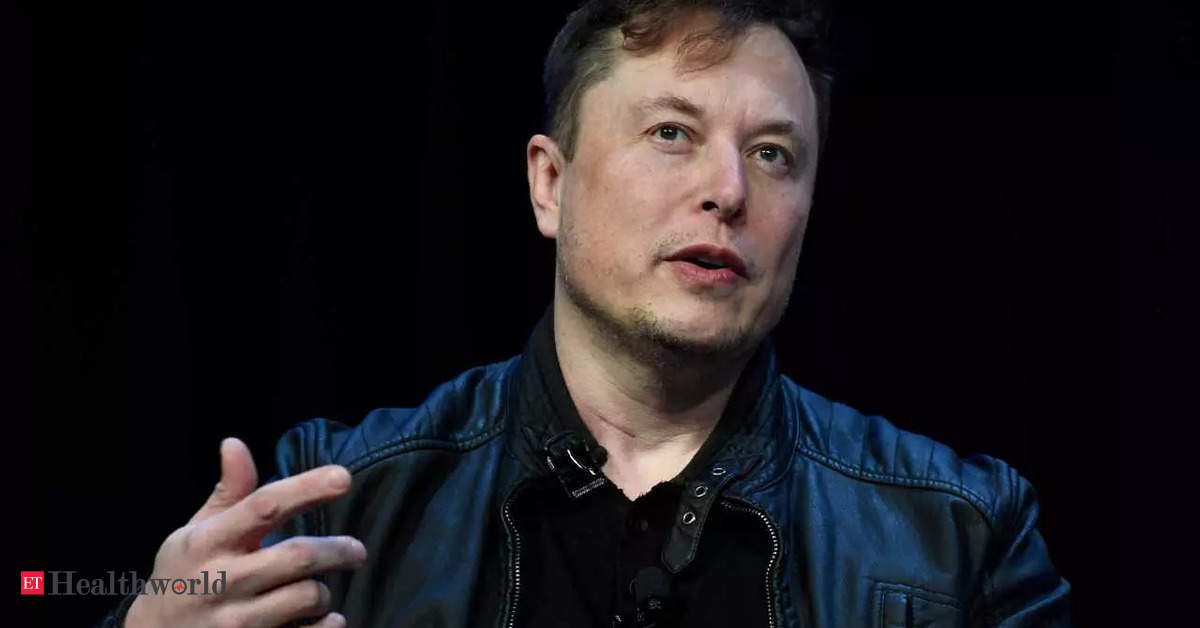

London: Four artificial intelligence experts have expressed concern after their work was cited in an open letter – co-signed by Elon Musk – demanding an urgent pause in research.

The letter, dated March 22 and with more than 1,800 signatures by Friday, called for a six-month circuit-breaker in the development of systems “more powerful” than Microsoft-backed OpenAI’s new GPT-4, which can hold human-like conversation, compose songs and summarise lengthy documents.

Since GPT-4’s predecessor ChatGPT was released last year, rival companies have rushed to launch similar products.

The open letter says AI systems with “human-competitive intelligence” pose profound risks to humanity, citing 12 pieces of research from experts including university academics as well as current and former employees of OpenAI, Google and its subsidiary DeepMind.

Civil society groups in the US and EU have since pressed lawmakers to rein in OpenAI’s research. OpenAI did not immediately respond to requests for comment.

Critics have accused the Future of Life Institute (FLI), the organisation behind the letter, which is primarily funded by the Musk Foundation, of prioritising imagined apocalyptic scenarios over more immediate concerns about AI, such as racist or sexist biases.

Among the research cited was “On the Dangers of Stochastic Parrots”, a paper co-authored by Margaret Mitchell, who previously oversaw ethical AI research at Google. Mitchell, now chief ethical scientist at AI firm Hugging Face, criticised the letter, telling Reuters it was unclear what counted as “more powerful than GPT4”.

“By treating a lot of questionable ideas as a given, the letter asserts a set of priorities and a narrative on AI that benefits the supporters of FLI,” she said. “Ignoring active harms right now is a privilege that some of us don’t have.”

Mitchell and her co-authors (Timnit Gebru, Emily M. Bender, and Angelina McMillan-Major) subsequently published a response to the letter, accusing its authors of “fearmongering and AI hype”.

“It is dangerous to distract ourselves with a fantasized AI-enabled utopia or apocalypse which promises either a ‘flourishing’ or ‘potentially catastrophic’ future,” they wrote.

“Accountability properly lies not with the artefacts but with their builders.”

FLI president Max Tegmark told Reuters the campaign was not an attempt to hinder OpenAI’s corporate advantage.

“It’s quite hilarious. I’ve seen people say, ‘Elon Musk is trying to slow down the competition,’” he said, adding that Musk had no role in drafting the letter. “This is not about one company.”

RISKS NOW

Shiri Dori-Hacohen, an assistant professor at the University of Connecticut, told Reuters she agreed with some points in the letter, but took issue with the way in which her work was cited.

Last year she co-authored a research paper arguing that the widespread use of AI already posed serious risks. Her research argued that the present-day use of AI systems could influence decision-making in relation to climate change, nuclear war, and other existential threats.

She said: “AI does not need to reach human-level intelligence to exacerbate those risks. There are non-existential risks that are really, really important, but don’t receive the same kind of Hollywood-level attention.”

Asked to comment on the criticism, FLI’s Tegmark said both short-term and long-term risks of AI should be taken seriously. “If we cite someone, it just means we claim they’re endorsing that sentence. It doesn’t mean they’re endorsing the letter, or we endorse everything they think,” he told a news agency.

Dan Hendrycks, director of the California-based Center for AI Safety, who was also cited in the letter, stood by its contents, telling Reuters it was sensible to consider black swan events – those which appear unlikely, but would have devastating consequences.

The open letter also warned that generative AI tools could be used to flood the internet with “propaganda and untruth”. Dori-Hacohen said it was “pretty rich” for Musk to have signed it, citing a reported rise in misinformation on Twitter following his acquisition of the platform, documented by civil society group Common Cause and others. Musk and Twitter did not immediately respond to requests for comment.